As humanity continues to push further into space, the challenges we face are no longer just technical; they are deeply political, social and ethical. Earlier this year, I had the privilege of being selected as one of the youth video competition winners for UNIDIR’s Outer Space Security Conference 2025. Participating in this global forum offered valuable insight into how policymakers, scientists, civil society and diplomats are addressing the growing complexities of orbital security. One key takeaway for me was the urgent need to bridge technical innovation with ethical responsibility, ensuring that as we integrate AI into space systems, we do so with transparency, fairness and international cooperation at the core.

My contribution to this issue was a speculative scenario that imagined how our choices could shape the future. The scenario I created, “Dispatch from 2050”, explored how African-led institutions, youth-driven innovation and ethical AI could play a critical role in maintaining orbital safety. At the heart of these issues lies a fundamental question: how do we ensure that the tools we build to safeguard space do not become sources of division or conflict? This question inspired the creative exercise that follows.

In the “Dispatch from 2050” scenario, a critical incident unfolded when a privately operated constellation and a State-led constellation came into conflict over contested frequency bands. Their automated systems, designed to respond independently to perceived threats, initiated a series of uncoordinated manoeuvres. These movements placed both networks on a trajectory that could have resulted in a catastrophic chain reaction of collisions.

To address these mounting risks, African institutions had helped establish the Lusaka Protocol code 101e in 2047, a multilateral agreement aimed at regulating AI-assisted decision making in orbit. The Lusaka Protocol code 101e emerged from years of growing concern that existing space governance instruments were ill-equipped to manage the rise of autonomous decision making in orbit.

During the crisis, a youth-developed AI system at the Lusaka Orbital Institute detected irregular movement patterns earlier than any human operator could. It predicted the likelihood of a collision and triggered an alert under the Lusaka Protocol code 101e. In response, an Emergency Orbital Hold was activated, freezing high-risk trajectories long enough to prevent immediate impact.

This scenario, though speculative, reflects trends that are already emerging today. Research shows a rapid expansion of mega-constellations and increasing congestion in low Earth orbit (LEO), raising concerns about collision risks and frequency interference. Participating in the discussion around space policy and security initiatives firsthand has shown me that managing space security challenges requires more than advanced technology. It demands foresight, coordination, and inclusive governance frameworks that allow countries, private operators, and even youth to collaborate rather than compete in ways that could escalate into crises.

Ethical AI and governance in Earth’s orbit

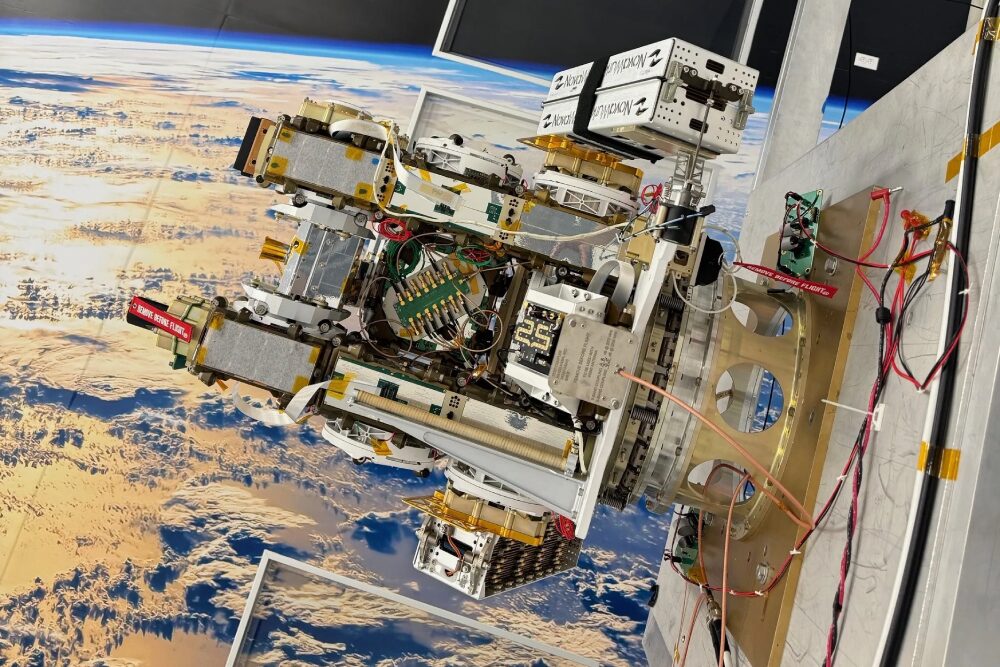

The proliferation of satellites has transformed LEO into one of the busiest environments managed by humankind. Mega-constellations, each numbering thousands of satellites, are redefining connectivity but also magnifying risks. Frequency interference, orbital crowding, and cascading collision hazards now pose systemic challenges.

Artificial intelligence is increasingly deployed to monitor orbital traffic without continuous human intervention, predict collisions, and optimize frequencies. AI-driven conjunction assessment systems can generate earlier and more precise collision warnings, allowing operators to plan avoidance manoeuvres with reduced fuel costs and minimal disruption to satellite services.
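To make the idea of a collision warning concrete, here is a minimal sketch of the geometric core of conjunction assessment. It assumes that both objects move in straight lines over a short prediction window, which is a simplification I am adding for illustration; operational systems propagate full orbits and account for position uncertainty. The names `State`, `closest_approach` and the 5 km alert threshold are hypothetical and not taken from any real system.

```python
import math
from dataclasses import dataclass
from typing import Tuple

Vector = Tuple[float, float, float]


@dataclass
class State:
    """Hypothetical snapshot of one object: position (km) and velocity (km/s)."""
    position_km: Vector
    velocity_km_s: Vector


def closest_approach(a: State, b: State, horizon_s: float) -> Tuple[float, float]:
    """Return (time in s, miss distance in km) of closest approach.

    Assumes straight-line relative motion within [0, horizon_s].
    """
    # Relative position and velocity of b as seen from a.
    r = [pb - pa for pa, pb in zip(a.position_km, b.position_km)]
    v = [vb - va for va, vb in zip(a.velocity_km_s, b.velocity_km_s)]

    v_squared = sum(x * x for x in v)
    if v_squared == 0.0:
        # No relative motion: the separation never changes.
        t = 0.0
    else:
        # Minimise |r + v*t|^2, which gives t = -(r . v) / |v|^2.
        t = -sum(ri * vi for ri, vi in zip(r, v)) / v_squared

    # Keep the answer inside the prediction window.
    t = min(max(t, 0.0), horizon_s)
    miss_km = math.sqrt(sum((ri + vi * t) ** 2 for ri, vi in zip(r, v)))
    return t, miss_km


# Two objects approaching head-on, offset by 1 km across track.
sat_a = State((0.0, 0.0, 0.0), (7.5, 0.0, 0.0))
sat_b = State((100.0, 1.0, 0.0), (-7.5, 0.0, 0.0))

time_s, miss_km = closest_approach(sat_a, sat_b, horizon_s=60.0)
ALERT_THRESHOLD_KM = 5.0  # hypothetical threshold for raising a warning
should_alert = miss_km < ALERT_THRESHOLD_KM
```

An earlier and more precise warning, in these terms, means estimating the time and miss distance sooner and with less uncertainty, so that operators have longer to decide whether a manoeuvre is needed.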

For instance, private companies like LeoLabs use AI-powered radar systems to track thousands of objects in LEO, enabling rapid detection of potential collisions. Intergovernmental and national space agencies, such as the European Space Operations Centre and NASA, also employ AI algorithms to optimize satellite constellation management and reduce congestion risks. These innovations illustrate that faster, more accurate monitoring can be an opportunity to prevent accidents, maintain the reliability of satellite services, and support global connectivity. However, risks arise when different operators’ AI systems act independently, potentially leading to uncoordinated manoeuvres.

The autonomy of these systems also raises new dilemmas. Automated collision avoidance systems operating without shared coordination frameworks may respond to the same perceived challenge in conflicting ways, increasing the chances of secondary conjunctions. In addition, private algorithms might determine orbital priorities without human oversight, creating opaque decision making that could undermine coordination and safety.
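A toy example can show why the absence of a shared rule matters. The sketch below reduces each satellite to a single altitude value and each manoeuvre to a fixed altitude change, which is an assumption made purely for illustration and ignores real orbital dynamics. The function names and both rules are hypothetical.

```python
def uncoordinated(alt_a_km: float, alt_b_km: float, step_km: float = 1.0):
    """Hypothetical rule: each operator independently raises its own satellite."""
    return alt_a_km + step_km, alt_b_km + step_km


def coordinated(alt_a_km: float, alt_b_km: float, step_km: float = 1.0):
    """Hypothetical shared rule: the higher satellite raises, the lower one lowers."""
    if alt_a_km >= alt_b_km:
        return alt_a_km + step_km, alt_b_km - step_km
    return alt_a_km - step_km, alt_b_km + step_km


def separation_km(alt_a_km: float, alt_b_km: float) -> float:
    """Vertical separation between the two satellites."""
    return abs(alt_a_km - alt_b_km)


# Two satellites predicted to pass 0.2 km apart in altitude.
before = separation_km(550.0, 550.2)
after_uncoordinated = separation_km(*uncoordinated(550.0, 550.2))
after_coordinated = separation_km(*coordinated(550.0, 550.2))
```

Under the uncoordinated rule both satellites move the same way and the gap stays at roughly 0.2 km, so the manoeuvres spend fuel without reducing the risk. Under the shared rule the gap grows to roughly 2.2 km. The point is not the specific rule but that both operators know in advance which one applies.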

Current legal and normative frameworks, such as the Outer Space Treaty, provide broad principles of peaceful use but do not explicitly address AI-driven decision making. As autonomous systems, including AI-enabled ones, take on operational control in orbit, new governance tools will be needed. The imagined Lusaka Protocol code 101e offers one such conceptual solution, anchoring decision making in ethical AI design, transparency and inclusive diplomacy.

African institutions are beginning to explore solutions in this space. For example, the South African National Space Agency is developing AI- and data-driven tools for space situational awareness, including monitoring orbital debris and supporting national and regional satellite operations. In academia, the University of Cape Town is conducting research into AI applications for satellite traffic management, while private startups in Nigeria and Kenya are exploring small satellite constellations with integrated AI for improved frequency coordination and orbital safety. These initiatives demonstrate the potential for African-led contributions to global space governance. Such work helps fill an important knowledge gap and helps ensure that African perspectives are represented in emerging norms and standards.

Taken together, these examples reveal that the challenge posed by AI in orbital management is not just technological capacity but governance alignment. While AI systems can significantly enhance safety, efficiency and sustainability, their benefits depend on coordination, transparency and shared rules of engagement among operators. Without common standards for data sharing, decision-making logic, and human oversight, autonomous systems risk reproducing fragmentation in orbit. This dynamic is particularly consequential for emerging space actors, as unequal access to data, infrastructure and governance forums may reinforce existing power asymmetries.

International dialogue is evolving to address these issues. For example, the UN Office for Outer Space Affairs has begun exploring the responsible use of emerging technologies such as AI in relation to space. The 2023 REAIM Call to Action, which received wide international support, underscores the global commitment to responsible AI use in the military domain. Furthermore, the OECD Recommendation on AI provides guidance on how to improve trustworthiness in AI systems. It offers a useful framework for assessing future AI-enabled orbital management systems, particularly in relation to the transparency of automated decisions, accountability for harm, and preservation of human control over safety in critical domains.

In the African context, scholars are beginning to explore how indigenous ethical systems, such as Ubuntu, could influence AI ethics by emphasizing communality, interconnectedness and shared responsibility. Such contributions show the need to define and operationalize African perspectives within AI policy frameworks.

Building the future we imagine

The imagined orbital crisis of 2050 might seem distant, but the seeds of prevention must be planted now. Governance of AI-driven decision-making systems in space remains underdeveloped. Recent research highlights how governance of AI-enabled space technologies is often reactive, with policy frameworks emerging only after risks or crises materialize. These frameworks should instead employ foresight, human oversight, and accountability at the design stage. This would ensure that systems managing space assets reflect collective human values.

In my “Dispatch from 2050” fictional scenario, the Lusaka Protocol code 101e was not written by domain experts alone, but together with storytellers, elders, scientists, and youth from Lusaka. The future of space governance must be inclusive. Historically, decisions about space exploration have been concentrated among a few nations. The UN “Space2030” Agenda and the African Union’s Space Policy and Strategy demonstrate growing recognition of the Global South’s role in shaping the future of space.

Africa, in particular, has shown leadership through data-driven projects in Earth observation, climate monitoring, and satellite innovation. Zambia’s increasing participation in technology innovation highlights the transformative power of youth-led research and policy development. Ethical AI systems developed by African institutions can ensure that space technologies serve developmental goals, such as improving agriculture, education and disaster response, while aligning with local values and human rights.

Internationally, instruments like the envisioned Lusaka Protocol code 101e could formalize ethical obligations, much as the Paris Agreement did for climate. At UNIDIR’s Outer Space Security Conference 2025, Zhanna Malekos Smith emphasized how data ethics underpins responsible governance in emerging technologies, providing a concrete example of how ethical practices in AI and data management can strengthen trust and accountability in space operations. Just as physical debris threatens satellites, ethical neglect threatens the stability of governance.

The 2050 vision, in which inclusively constructed AI systems protect Earth’s orbit, may seem aspirational, yet it is built on principles we can adopt today. The fictional Lusaka Protocol code 101e reflects the real potential of collaborative, human-centred innovation. If we succeed, the Lusaka Protocol code 101e of tomorrow will not be fictional, but a living embodiment of a world that chooses dialogue over dominance, inclusion over isolation, and ethics over expediency. In the end, space security is not about protecting satellites; it is about protecting our shared future.

Kondwani Mbale is an Artificial Intelligence student at the Specialized Institute of Applied Technology — City of Trades and Skills. His work focuses on computer vision, data analysis and intelligent systems. He has participated in international initiatives, including the ICANN80 NextGen programme and the FIRST Global Challenges, and is a laureate of the International Youth Competition of Scientific and Sci-Fi Works.

This commentary is a special feature of UNIDIR’s Youth Engagement initiative. The author, Kondwani Mbale, was selected as a winner of the Outer Space Security Conference 2025 Youth Campaign. The views expressed in the publication are the sole responsibility of the individual author and do not necessarily reflect the views or opinions of the UN, UNIDIR or their staff members or sponsors.